Lessons about complexity from two infamous fires

The Great Baltimore Fire of 1904 made standardization the cornerstone of engineering. The recent Palisades Fire challenges that idea.

The systemic role of climate change in ecological disasters like wildfires is well known. Changing environments create the conditions and fuels for increasingly devastating fires that are harder to put out. Warmer temperatures, drier vegetation, and stronger winds create a literal tinderbox for human activities that increase fire risk.

In response to fires like these, engineers have designed systems of municipal infrastructure to respond effectively and mitigate damage. For example, the engineering design of fire hydrants includes a specifically sized outlet to deliver volumes of water capable of stopping a fire. The design of this engineering system also includes decisions about the number of outlets on hydrants, the volume of water they can draw from, and the overall system of pressure. The history of organized firefighting is littered with examples of engineering design improved as a result of great conflagrations.

However, one fire in particular changed the discipline of Engineering – with a capital E – forever. I am not talking yet about the 2025 Los Angeles Palisades fire, which I also think, and hope, will change Engineering moving forward. I am talking about another fire that had a significant influence on how engineers design and build things: the 1904 Great Baltimore Fire.

The Great Baltimore Fire of 1904 changed engineering in a profound way. Many firefighters from different cities rushed to help the local fire brigade only to find that their hose connections were incompatible with the ones used in Baltimore. Each city had its own system of firefighting practices and equipment – a paradigm of engineering decentralization and heterogeneity. When thinking about multiple units of firefighters from different cities coming together, like they did for the Great Baltimore Fire, the challenge of integration becomes apparent, but when thinking about each fire department, I would dare to say that their unique systems made sense for their local reality and needs.

The disastrous realization that the combined capacity of the fire departments that came to Baltimore could not be used, because their equipment was incompatible, drove sweeping standardization efforts and the strengthening of the National Institute of Standards and Technology (NIST), which at the time was known as the National Bureau of Standards. NIST’s directive was to develop standards for commercial materials and products. In this way, the Great Baltimore Fire marked a movement of the engineering discipline into an era in which the integration of complex systems was achieved by standardization.

The Great Baltimore Fire and, more recently, the LA fire are bringing society to a new question for engineering:

How do engineers compose with and live in harmony with Nature, which doesn’t conform to standards and makes life evolve and grow out of diversification? This is in stark contrast to standardization.

This raises another question for Baltimore:

What if, instead of making all fire hose connections the same, engineers could figure out a way to operate a system of hoses with different materials? Standardization, while the most intuitive solution to coordinate systems, is not the only one.

Has the drive for standardization itself become a systemic cause of wildfires and other anomalies of complex systems, broadly construed?

The Great Baltimore Fire and the rise of standards

My wife is working at Johns Hopkins Hospital in Baltimore, MD, and I got to spend time with her while setting up her apartment and facilitating the beginning of her new journey.

While in Baltimore, I decided to visit the NIST campus based in Gaithersburg, Maryland. I know several scientists and engineers working there, so I decided to go for lunch with them and learn more about NIST. They invited me to visit the NIST Museum where I learned the origin story of the institute in Baltimore and in the US.

The origin story of NIST goes like this (from the NIST Museum):

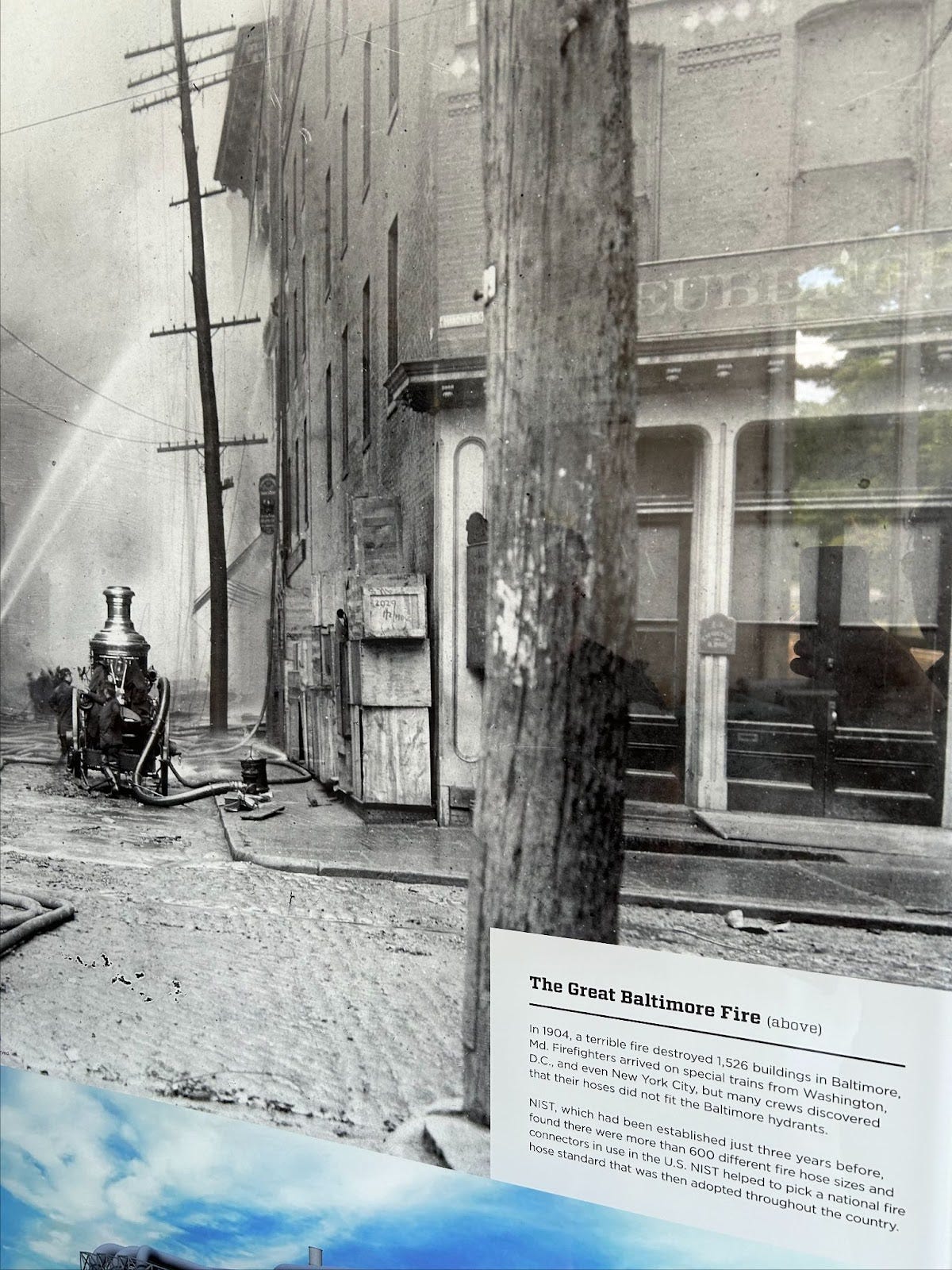

“In 1904, a terrible fire destroyed 1,526 buildings in Baltimore, MD. Firefighters arrived on special trains from Washington DC, and even New York City, but many crews discovered that their hoses did not fit the Baltimore hydrants.

NIST, which had been established just three years before, found there were more than 600 different hose sizes and connectors in use in the US. NIST helped to pick a national fire hose standard that was then adopted throughout the country.”

NIST's response to the problem of coordinating many variations of hoses is one that now dominates, and has even come to hegemonize, the world of engineering and management: the centralized management of standards, requirements, and systems. The key to the integration and coordination of systems, under this paradigm, is to standardize the interfaces of each system. If all the hoses and hose connectors were the same, then the problem of coordination would be solved.

The NIST museum is explicit in describing the many benefits this approach has brought to humanity and to the United States. Since the Great Baltimore Fire and the establishment of systems of standardization, like NIST, globalization and the acceleration of progress have been mind-blowing. Advancing standardization, they imply, has allowed a wide and diverse range of industries to achieve interoperability, compatibility, repeatability, interchangeability, and the levels of quality required for high-precision machinery, all of which have been essential to modern civilization and to industries like aviation, transportation, supply chains, medicine, construction, space, mining, energy, communication, entertainment, education and almost everything we see, use, and enjoy in the modern world.

Most systems of governance envisioned today are centralized. This ranges from extreme cases like monarchies and dictatorships, in which control and decision making is completely centralized, to modern democracies in which people have an aggregated voice and are “represented” by a central system of governance. These mechanisms of governance, decision making, and control are inseparable from their function as collective systems. Although centralized systems have historically been an effective way to organize decisions, society has reached the point where centralization and hierarchical methods are less helpful in dealing with complexity.

Today’s political instability is an example where the limitations of largely centralized, hierarchical systems present themselves. It is in these scenarios that systems need an alternative, non-standard, non-hierarchical operating model. By our definition, a system cannot be considered collective if its control is centralized and based on standardization. In other words, if a collective system were centralized and hierarchical, it would be just another individual system and not a collective, and it would not need the new type of science, philosophy, and technology that a collective technology requires.

Despite society’s reliance on centralization, there are several precedents of modern government that have been established without the need for a centralized hierarchical organization. Models such as the United Nations, NATO, UNESCO, World Health Organization, and many others have, while imperfectly, proven to be stable and effective in many dimensions of relevant issues about society and collectivism.

Biting off more than standardization can chew

The complexity of modern socio-technical systems, driven by an increasing dependence on technology, is elevating the need for new formal methods to define integration across disciplines and industries. By describing challenging concepts like ethics, values, morals, and rights in more rigorous and transparent terms, systems can access new forms of collective governance and control. For example, advances in mathematics now make it possible to formally represent viewpoints and compose those viewpoints into a collective with a guarantee that the individual input viewpoints are respected. Because this method is based on the formality of mathematics, it comes with guaranteed correctness via mathematical proof.

The need for technology to help with the formalization and composition of these concepts is higher than ever. In many ways, we are at the dawn of profound technological transformations. The path dependency of technological trajectories is accelerated by demand, popularity, and funding as each technology proves to be useful. Once a technology is launched and released into the world, the cost of adjusting its affordances is significantly higher as adoption is converted into technological momentum and inertia.

In 2023, we were already seeing post-release concerns with the launch of ChatGPT and related large language model technologies. Experts and policy makers have suggested that the risks and concerns these technologies produce can be addressed by adopting frameworks like the AI Containment Problem, in which the focus of design and operation of the technology is containment in a safe space under human supervision, or the AI Alignment Problem, which seeks to ensure that the goals of the technology align with the goals of humanity.

From the perspective of collective systems, it is naive to believe that alignment (or standardization) of goals can be achieved. In fact, even small “hallucinations” already displayed by the technologies are a lack of alignment in goals with humanity and its users. So far these are not direct catastrophic consequences for humanity, but they certainly represent that a lack of alignment is already present.

In terms of governance and control, bias can become a source of concern. The perspective that each individual system has can be different, allowing bias to act as a source of unsafe scenarios or discordant affordances. Collective networks tend to balance bias by increasing the diversity of perspectives, but this is not a guarantee because biases can also become solidified and augmented by effects like echo-chambers or lack of compositionality.

The lack of centralized governability and control of collective systems presents a whole new challenge for humanity: how do we partner in a safe way with technology without having control and hierarchy over it? I believe that in this time of celebration of everything achieved by standardization, it is time to ask the question: What could we have done differently in Baltimore? How could we have solved the problem of the 600 hoses without standardizing the connectors?

Asking this question is challenging because standardization has been incredibly successful and helpful. “Don’t fix what is not broken” is a common phrase heard in the hallways of engineering schools and companies.

But original and innovative thinking requires challenging the core paradigms that drive current society. What follows is the argument for why there is an urgent need for a new way to deal with complex systems, and specifically with the variation of components that is required to maintain the health of their structure.

Instead of questioning the fundamental paradigm of standardization, we assume it’s the only way. We carry on, business as usual, unknowingly using a limited worldview. We keep trying to make standardization work, when the reality is that our systems are crying out for something different: something that supports flexibility, resilience, and adaptability. We need a paradigm that lifts us outside of this restricted view. Engineers and business leaders need to think of many perspectives and systems at once and figure out how they fit together. To do that, they must let go of aligning binary labels as the starting point and instead make decisions by assembling a network of interactions and relationships.

This is not a comprehensive argument about the paradigm of standardization, nor a solution or response, but an invitation to have renewed conversations about the future of Engineering and Management. Some arguments to consider when thinking about the limitations of standards to deal with complex systems:

The Great Baltimore Fire included 600 types of fire hoses but one type of fire. The fire, because it was so immediately threatening, sucked all perspective and drove a hyper focus on one aspect of a complex network of systems: the city of Baltimore and the United States in the 1900s.

Standards can be de facto, meaning that they emerge organically from a market of individuals choosing to adopt them; de jure, meaning that they are legally binding and enforceable; or voluntary. Complex systems operate on top of many voluntary standards that are not enforceable but are upheld by societal and cultural norms, which means that they are vulnerable to swings in cultural values and beliefs. Standards assume enforceability when this isn’t always the case.

Standards are written by a writer, which means they tacitly convey meaning and the “spirit of the law” which has to be interpreted by a reader, a process that is prone to error, subjectivity and bias.

Standards are not flexible. Once encoded and institutionalized they become part of the structure of a system, with organizations, jobs, and careers built around them. Once a hose connector has been chosen to be the standard it becomes the incumbent. This is why many companies, once they grow to a certain level, become major advocates of regulation and standardization, as when Facebook asked the government to regulate social media. It might look counterintuitive that a player would want to regulate itself, but it is not once we realize that fixing standards around anything raises the bar for new players to compete. Designing a new hose connector for fire hoses is not only about proving that it works better than the current ones but also about proving that it is so much better that it justifies all fire departments and cities spending significant money, effort, and time changing them.

Standardization requires organizing systems by membership or labels and ignores relationships, functions, and context. This promotes fixation on details and differences and emphasizes static models. Once we start listing things that are needed for a system to work, we struggle to put them back together and reason about how they become part of the whole. When we decompose something, there’s no need for a particular order or relationship between the parts we list, whereas in composition, the relationships are actually considered to be parts in and of themselves. In other words, when decomposing, we tend to preserve only the “what” and not the “how” of a system.

According to our current biological taxonomy system, it doesn’t make sense to think of, for example, cats and dogs together. These two species, Felis catus Linnaeus and Canis lupus familiaris Linnaeus, don’t share enough common intrinsic properties. To find commonalities, we have to go up a few layers of taxonomy to the order Carnivora, which then broadens the scope too far, now including lions and polar bears. But if I’m trying to solve a problem that involves any house pet that sheds on its owner’s furniture, this kind of thinking is useless. Likewise, if I am trying to extinguish a specific type of urban fire, the concept of “tools” is not specific enough. Standardization requires a level of specificity that drives our relationship to complex systems to be decomposed and reduced instead of holistic.

There are an infinite number of ways to classify animals based on their contexts, the roles that each creature plays, and their relationships. Yet we continue to classify them using intrinsic characteristics, sacrificing the richness needed to describe them in any other way. Our genetic code, at some point thought to be the map of who we are, has proven not to be a hardwired system that defines our behaviors. Alison Gopnik, professor of psychology and philosophy at UC Berkeley, states that there’s material new evidence that supports the idea that behavior isn’t defined by innate characteristics. This includes new work in epigenetics, Bayesian models of human learning, and increasing evidence for a new model of human cognition. Harvard professor of psychology Steven Pinker supports this notion by stating that behavior depends on the combination of genes and environment.

What about mapping a universal experience of the self? This has been a central topic of philosophy and psychology for decades, if not centuries. Bruce Hood, professor of developmental psychology at the University of Bristol, says that when we default to the self as an explanation for our behavior (e.g., “That’s just the way I am”), we cut off any other possibility for understanding. This may be a useful trick when dealing with the complexity of our relationships with the universe around us; however, this perception of a self is an illusion that’s easily deconstructed. Am I still an artist if I haven’t painted in a couple of years? Am I still an engineer after I retire? Am I still a son after my parents are gone? It’s obvious that we cannot meaningfully define ourselves by internal characteristics alone. It’s our relationships that define us.

Standards fix the context for a system to operate. Interoperability, in this case, requires systems to be accompanied by certain circumstances that are specific for a particular use. Material systems become static and attached to a particular use. There’s a TV show called Guess the Gadget where the host presents random objects and the participants have to guess what they’re used for. This game show is interesting because the contestants don’t know the context for the objects or how they relate to other objects. Trying to identify them using only internal characteristics fails so badly that it makes the show comical. In fact, when the host finally explains a particular device, it’s almost always by using other objects. “This thing is to open a bottle,” she might say. The bottle is necessary to define the bottle opener.

Many believe that the art of engineering is the art of interfaces. It’s true that we can model relationships like this for any number of decisions. If the biology domain produces an output, the hydrology team can take that output and use it as an input. This matches our intuition and seems to make sense. Problem solving and engineering typically consider inputs and outputs with variables that are shared between domains. But here's the problem: we know too much about these domains. Because we know too much, we tend to get stuck on details. We cannot generalize the relationships and interactions in a way that adapts well to change. Therefore, in a counterintuitive way, the more we lean into details and data, the more trapped we become. Engineering of complex systems is not just a problem of interfaces but a problem of adaptability.

The assumptions of intrinsic characteristics that are required to standardize are utopian ones: If only we were able to agree universally on the labels we assign to things, then all problems would be solved. If only we didn’t change those labels without notice. If only we could keep all those labels updated as things changed. These are huge “ifs.” We all know at this point that the only constant is change. Almost as soon as a standard is created, it’s obsolete. Pursuing static definitions is a trap. Furthermore, grouping and assigning memberships is problematic because it assumes that all objects within a group actually share the same characteristics. This drives a type of group thinking that ignores the richness of each object’s uniqueness. In other words, we discard how I actually behave as an individual and consider only the behavior of the group I belong to. This is useful because it allows us to reason about incredibly complex systems, like a society with millions of people, in aggregated ways, but it also supports damaging stereotypes.

Assigning memberships also causes us to discard individual observations as mere anecdotes that don’t necessarily represent the overall system behavior. This trap is what journalist Nicholas G. Carr calls anti-anecdotalism. This is important because it’s an argument about the limitations of data analysis. He explains that rejecting anecdotes pushes science too far away from actual life experience. Rather than claiming that individual behaviors don’t matter, it would be more honest to admit that we don’t have the tools to reason effectively about the millions of individuals in a group.

Today—when we make decisions based on sets—we’re unable to carry conflicting thoughts simultaneously in our heads. Therefore, we choose to instead decompose the problem and focus on only one dimension of it at a time. We work on each component of a system individually. Viewing the world through this narrow lens has predisposed us to think in a way that splinters our perspectives when we desperately need them to converge. Grouping problems into specific buckets inevitably leads to dividing efforts by industries and, even more granularly, by discipline. This is why organizations like Pumps & Pipes in Houston, Texas, are counteracting this by collaborating across multiple different industries with no separation to foster “Innovation for the Benefit of All.”

Standardization drives the opposite of compositionality, as it presupposes that integration can only happen when the interfaces of two systems are compatible in exactly the same way.

It is a plausible argument to state that standardization might be a cause, or at least a contributing factor, for the LA fires of 2025. It has driven a standard type of construction and structure in an ecological system that is ever changing, which has produced material consequences like the destruction of the soil within urban systems due to standard practices of gardens and front yards. Whereas the monocultural effect of standards was previously viewed as an enabler of progress, we’ve reached a point with contemporary complex systems where it’s actually leading to damaging consequences.

Moreover, standardization is not how life operates. The reality of life grows complexity and diversity instead of reducing it. Standardization in this way is a death sentence for life, which, in the case of the LA fire, was sacrificed for containment. The life and diversity of the local ecosystem were traded for the containment and control of neighborhoods.

The LA fire has notably impacted residential neighborhoods, meaning that it is operating within a context in which Nature and engineered infrastructure are interacting in structures and dynamics that are contributing to the catastrophic results that we are all witnessing.

The LA fire and the inflection point towards compositionality

Back to the question posed at the beginning about the Great Baltimore Fire of 1904: What if, instead of making all hose connections the same, we could figure out a way to compose materially different hoses?

One alternative way to think about how to make two different hoses work together is to have an instrument that transforms from one type of connection to the other one, like an adapter for electric plugs used when traveling internationally. These components are often called “crossovers” because they help cross one type of interface to another in a compatible way.

The challenge of this adapter approach is that we need to know all types of connections beforehand in order to design the adapter. In a way, the adapter doesn’t adapt itself but adapts an incompatible connection in a predefined way. This is feasible for electrical connections because the variety of plug types, while extensive, is limited enough that most alternatives can be included in common adapters.

But an adapter would quickly render itself useless if there were no way to know the types of connections and conditions that would have to be adapted. Since it is a fixed instrument, it is not capable of morphing into something useful in an improvised way. Despite their name, adapters exhibit no real adaptability.
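To make the limitation concrete, here is a minimal sketch in Python of an adapter as a fixed lookup table. The coupling names and the table contents are hypothetical, invented only for illustration; they are not historical hose specifications.

```python
from typing import Optional

# Hypothetical coupling names. The table stands in for a set of fixed
# "crossover" fittings that were designed ahead of time.
KNOWN_ADAPTERS = {
    ("baltimore_thread", "washington_thread"): "crossover_A",
    ("baltimore_thread", "new_york_thread"): "crossover_B",
}


def find_adapter(hydrant: str, hose: str) -> Optional[str]:
    """Return a predefined adapter, or None if the pairing was never anticipated."""
    if hydrant == hose:
        return "direct"  # identical couplings need no adapter
    # A crossover works in either direction, so try both orderings.
    return KNOWN_ADAPTERS.get((hydrant, hose)) or KNOWN_ADAPTERS.get((hose, hydrant))


print(find_adapter("baltimore_thread", "washington_thread"))    # a known pairing
print(find_adapter("baltimore_thread", "philadelphia_thread"))  # an unanticipated one
```

The second call returns `None`: the table can only answer for pairings someone listed in advance, which is the sense in which a fixed adapter does not adapt.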

We would need another type of instrument that today doesn’t exist in engineered systems: a truly flexible adapter that maps both ends of the connection as it encounters them. This requires the component updating its own structure and morphology to match the context around it. We will call this fictional device a “mapper”. A mapper is a technology that exists today in the mathematics of category theory but not yet in computational machines and cognitive technologies. Category theory, in simple terms, can be thought of as the mathematics of relationships, which involves composing or mapping between mathematical structures. Leading category theorist, Spencer Breiner, who happens to work at NIST, refers to category theory as the “cartography for the information age.”

Today these maps live only in information systems, famously limited by the fact that “the map is not the territory.” However, to physically connect two components the mapper should also transform its material and phenomenological reality to enable both types of connection. The capability of furnishing the mapper – a mathematical information system – into a phenomenological system, is the key characteristic of the tool that we are calling a Compositioner. The Compositioner connects two previously unknown components without a predetermined adapter or forcing the individual components to align on a standard interface.

The Compositioner, like the mapper, is another fictional technology at the time of writing. Engineers don’t yet have the capability and tools to produce a system that can map and convert itself materially to compose two systems together. Holon is committed to conceiving, developing, and achieving such a compositional technology.

Biological systems are compositioners, capable of truly adapting their own material bodies to come together into relationships, communities, and ecological contexts. They do that by building relationships with their context. This is the opposite of what engineered systems tend to do, which is to isolate and separate the system from its context as much as possible, securing its functioning and increasing its reliability by keeping the system controlled and bounded.

The reasons behind this approach are valuable and important: reliability and predictability are essential for systems like buildings, airplanes, nuclear plants and so on. Life in biological systems, on the other hand, is considered messy, unpredictable, and uncontrollable.

The direction of engineering and infrastructure in modern systems has completely embraced one paradigm in negation of the other, treating this dichotomy as a paradox in which we have to choose one or the other. The result is that our cities are built by removing life – literally – from the soil, construction materials, and context to maintain as much control and predictability as possible. This result is desirable at a certain scale, but it also makes the city less adaptable to changing conditions and therefore less resilient and less capable of responding to events like the LA fire of 2025.

Talking about the soil under LA in the context of a fire might seem counter-intuitive, as the fire is literally happening above the soil, but the capacity of the building and infrastructure to compose with the soil, air, water, insects, microbiota, and other systems in LA is related to its compositionality and therefore related to its resilience and capacity to deal with fires and natural disasters.

From here, this document is a declaration of Holon’s intentions as we lead a new era of engineering. The new way to coordinate the collectiveness of complex systems, like LA and Nature interacting during a fire, would require:

Adaptability

The problem of standardization is that it needs to know beforehand the conditions and systems, but not knowing what is coming into the relationship is essential for complex compositionality.

The conditions are not fixed but ever changing; context is always pushing for new paradigms, new structures, and new behaviors.

The other aspect left unconsidered in the Baltimore Fire of 1904 (but made evident by the LA fire) is that new types of fires are emerging, not just different types of hoses and connections. New types of fires require not only water but also other suppressants, other ways of reaching the fire (airplanes), and a different scale of response.

The unprecedented complexity of contemporary systems results in emerging behavior and consequences. An example from the 2025 LA fires was the increase of firefighting airplanes hitting drones. Two unprecedented scenarios – the major fire and historic levels of commercial and public drone use – led to an unsafe scenario that was previously unpredictable.

Another example of emergence from 2025 was the catastrophic loss of water in the hydrants while firefighting in Palisades. The water storage tanks feeding municipal hydrants ran out of water during a critical moment for responders. It was determined that the reservoir that typically supplies water to the city had been drained and shut down for maintenance before the wildfires began.

Skin is an example of a biological system that adapts with temperature, with age, with gains and losses of weight, with sun exposure, and with touch. The core structure of the system is preserved but the actual experience of the structure is changed as it changes its behavior. A composition is a system that adapts its own material structure.

Material Formality

Material formality is a relational feature of the environment, which includes the individual components and the surrounding context. This is in contrast to information as described by Shannon Information Theory.

The physical context of the components and their embodied formality contain relational information that specifies opportunities for behavior and adaptation (i.e., affordances).

A Compositioner is not a tool for meta-standardization, which would make it something that lives only in the information world. Instead, it materializes in the formality of the physico-chemical affordances of the system. This puts the focus on the phenomenological experience of composition rather than on the information about the experience.

A newfound awareness of relational experience is required of the constituent parts for the system to adjust their material structure to compose with the affordances of others. A prerequisite to this is the awareness of the self body experience. In other words, a component won’t know which structural adjustments are needed without the capacity to self-reflect.

Adding formality to material structures and composing through affordances allow constituent parts to come together through an embodied collective. The Compositioner’s orchestration of material adaptive action from the constituent parts leads to a form of embodied collective cognition.

The Compositioner contains relational information that specifies material affordances relative to each component. This relational information is complete and can contain information that specifies affordances. This relational information is not a measurable, quantifiable thing that exists alongside the objects being composed.

A Compositioner is a material technology, which means that it is not an abstraction or an attempt to achieve composition at an information level that would later be translated to the material world. Instead, it is phenomenology-based compositionality, and not merely model-based phenomenology. In other words, the composition is achieved by modifying the material phenomena themselves.

Agricultural monocultures are more efficient in a few dimensions because the affordances of the natural biological system are violated without any consequence for the perpetrator, while the other dimensions and the complexity of the system are neglected in favor of a reduced and simplified perspective. One caricature example would be an attempt to maximize the efficiency of an airport runway for take-offs by neglecting that airplanes also have to land; in other words, by not respecting that an airport runway allows only one airplane at a time.

The technology of a Compositioner would come from universalizing the formality of adaptive compositional hardware. This is similar to what Information Theory did for information: it universalized the symbolic meaning of information into zeros and ones, so that from a few components you can create many. The technology of DNA and of stem cells is likewise that one can become many.

A chair is an example of a fixed material affordance: an instrument for sitting (relative to humans). A material technology like this one always affords sitting, as a single function. But if you could build a machine that adapts, it could become many things at different times. At this point, this is the science fiction part of the Compositioner. If the rule can be conveyed by machinery that adapts, then the rule could be adapted to whatever the machine wants at each particular moment.

The Holon contribution to the world can be summarized as follows. Today we do have machines that convey affordances (they all do), but those affordances are limited to one per object, entangled in a permanent relationship with that object. A stapler staples. Beyond biological systems, we do not yet have machines that can adapt their physico-chemical structure so that they can be cognitively adapted to other relationships. Bodies changing during pregnancy illustrate the biological case: the internal structure adapts in order to change the affordance (what do I want to be used for?). With scarce exceptions, we don’t observe this today in non-informational engineering technologies. Machines of information can do this because their transistors encode zeros and ones.

How does a change of context affect the object? What is the difference between a backpack and a trash can? We can agree that each is attached to one outcome or function. Here we need colimits. The Mapper is the overlap in the colimit, unique to each relationship of affordances. The backpack and the user are nodes. The cognitive technology is the connection between user and backpack. This intermediate node adjusts its own structure to accommodate the changing structure. The intermediate machinery is the lens that one node uses to observe the other. If it could internally change its structure, the backpack would become, for the observer, something else. The functor is the negotiator we use to see whether we can compose through a colimit.
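To make the idea of an overlap inside a colimit a little more concrete, here is a minimal sketch of the simplest such construction, a pushout of finite sets: two nodes are glued together along a shared overlap. Everything named here is an assumption made for illustration. The `pushout` function, the feature names ("strap", "shoulder", and so on), and the single overlap element "carry" are hypothetical and are not a description of how a Mapper is actually built.

```python
def pushout(a, b, overlap, f, g):
    """Glue finite sets a and b along an overlap.

    f maps each overlap element into a, g maps it into b.
    Returns the set of equivalence classes of the tagged disjoint union,
    where f(o) and g(o) are identified for every o in the overlap.
    """
    # Tag elements so that a and b stay disjoint even if they share names.
    parent = {("a", x): ("a", x) for x in a}
    parent.update({("b", y): ("b", y) for y in b})

    def find(node):
        # Union-find lookup with path halving.
        while parent[node] != node:
            parent[node] = parent[parent[node]]
            node = parent[node]
        return node

    # Identify the two images of every overlap element.
    for o in overlap:
        root_a, root_b = find(("a", f[o])), find(("b", g[o]))
        if root_a != root_b:
            parent[root_b] = root_a

    classes = {}
    for node in parent:
        classes.setdefault(find(node), set()).add(node)
    return {frozenset(members) for members in classes.values()}


# Hypothetical features of the two nodes and of their overlap.
backpack = {"strap", "pocket", "opening"}
user = {"hand", "shoulder"}
overlap = {"carry"}
glued = pushout(
    backpack, user, overlap,
    f={"carry": "strap"},      # where the overlap lands in the backpack
    g={"carry": "shoulder"},   # where the overlap lands in the user
)
```

In this sketch the strap and the shoulder end up in one shared class while every other feature stays separate. Choosing a different overlap produces a different glued whole, which is one way to read the claim that the overlap is unique to each relationship of affordances.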

Allowing the couch and the sitter to have instruments of affordances that are universal is a form of cognition as it makes its way through an ever-changing universe. We don’t want a Compositioner for each relationship, as it would then be just a traditional machine. For it to be a collective cognitive technology, it needs to be universal, at least in theory, and as a discipline of engineering we need to strive to get there. The journey of Holon products is to start like Turing did: with one mapper that is specific to one type of relationship. In our case, Holon Gardens are enacted, as an example, through the relationship between humans and birds, mediated by one thing. Our next step is to conceive a machine that would enable worms and birds to relate without the need to create a new machine, and then to continue progressively towards more generality and more composition. The Universal Compositioner is the generalized Mapper. This is the technological innovation and journey of Holon.

We at Holon are intentionally conceiving overlaps, not just revealing them. We believe that this process cannot be automated. You have to create the lens of composition, and by doing so you can merge two different systems. It is an intentional act of creation, not discovery, born so that we could compose.

The technology contribution is the object that’s created to allow the parts to compose. In a material composition the parts will decide to create a shared lens.

Compositionality

Category theory provides the theoretical foundation for studying things and processes that compose. Composition refers to putting things together in a system so that they become a whole, based on a fundamental building block of three nodes.

Bringing together things that were not originally thought to belong together is one aspect of compositionality. The other is that the structure of meaning must be preserved as these nodes come together: if A->B and B->C, then A->C should come for free from the other two relationships. This mapping from A->C is the composed relationship, and its existence is guaranteed by the axioms of category theory. In other words, category theory provides the rules to describe composed relationships from their parts.
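The three-node building block can be sketched in a few lines of code. This is only an illustration under stated assumptions: the `Morphism` class and its `then` method are hypothetical helpers invented here, and the arithmetic inside the two arrows is arbitrary. The point is that once A->B and B->C are given, A->C needs no extra information.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Morphism:
    """A labeled arrow from source to target (hypothetical helper)."""
    source: str
    target: str
    apply: Callable[[Any], Any]

    def then(self, other: "Morphism") -> "Morphism":
        """Compose self (A->B) with other (B->C) into one arrow A->C."""
        if self.target != other.source:
            raise ValueError(
                f"cannot compose {self.source}->{self.target} "
                f"with {other.source}->{other.target}"
            )
        return Morphism(
            self.source,
            other.target,
            lambda x: other.apply(self.apply(x)),
        )


# Two arbitrary example arrows; the arithmetic is illustrative only.
f = Morphism("A", "B", lambda x: x + 1)
g = Morphism("B", "C", lambda x: x * 2)

# The composed arrow A->C is built from f and g alone.
h = f.then(g)
```

Here `h` goes from "A" to "C" and applies `f` first, then `g`. Trying `g.then(f)` raises an error because the endpoints do not line up, which mirrors the rule that only arrows sharing a middle node compose.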

A Compositioner is a technology that maps while preserving the meaning of each side of the relationship. We are conceiving a technology that helps us recognize that we are intimately connected to each other and to things in our environment, so much so that they are actually a part of us. The Compositioner brings its own set of rules for how to put the pieces together, and each component maps its own piece with its own structure and properties.

The ability to keep every relationship accounted for can only be achieved with the mathematical theory of composition, category theory.

It is not enough to have just the Universal Mapper (i.e., the Compositioner). It has to have the property of compositionality. You can achieve the Universality of the Mapper without Compositionality, but you will need Compositionality to compose material affordances so that the system can handle the complexity of a growing number of individuals in a collective system.

Material formality means that as the formality of material phenomena increases, the Universality of their affordances increases as well. Formality in the universalization is an independent requirement that is only achieved with compositionality.

Wrapping up

We described how the Great Baltimore Fire of 1904 led to the standardization of fire hose connections and the establishment of NIST, revolutionizing engineering by emphasizing integration through standards. However, we at Holon question whether this standardization approach is adequate for dealing with complex systems, particularly in the context of modern challenges like the 2025 Los Angeles Palisades Fire. We propose a shift towards compositionality and material formality, away from information-centered engineering. Specifically, we suggest developing a new technology that we are calling a "Compositioner": a technology capable of adapting and connecting diverse systems without predetermined standards. Drawing inspiration from biological systems and category theory, it is meant to better handle the unpredictable and evolving nature of complex situations.

In the LA fire, different entities were relating to each other. Today we govern the relationships between those entities by centralizing and standardizing them. We should instead move to a technology that can change and adapt, because we don’t know what types of fires and emergencies cities will face in the future. We need a technology that can embrace and lean into its relationships when they emerge. Because there are, in practice, infinite relationships, the technological revolution is to universalize those Compositioners. We are just at the beginning of this journey, but these are the key first steps.
